Hi all,

I noticed this thread about the effective clock and have some questions, or maybe I'm misreading it. I run a manual overclock to 4.7 GHz on all cores of an 8700K, with C-states disabled, the Windows 10 power plan set to Bitsum (ParkControl), and no cores parked. Somehow the effective clock is still about 50% lower than the actual clock speed. Am I losing performance somewhere?

When I run the OCCT stress test, all cores ramp up to 100% and the effective clock is then correct at 4.7 GHz. The question comes from my sim racing, which is very CPU demanding: the performance app of the Oculus Rift shows the GPU still has 50% headroom, which makes me think: is my CPU really that slow?

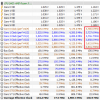

The attachment was taken while in Assetto Corsa with 24 AI cars.

Thanks in advance.